In the fast-moving era of artificial intelligence and high-performance computing, memory bandwidth has become a critical bottleneck that limits compute performance, what the industry often calls the "memory wall" problem. Picture a GPU's compute capacity as the assembly line of a giant factory, while conventional memory offers only a narrow "raw-material supply pipe", leaving expensive compute resources idle while they wait for data. This is the central challenge facing AI training today. HBM4 (High Bandwidth Memory 4) is designed to break through this bottleneck, providing the memory backbone needed for the AI-driven surge in compute demand.

What is HBM4?

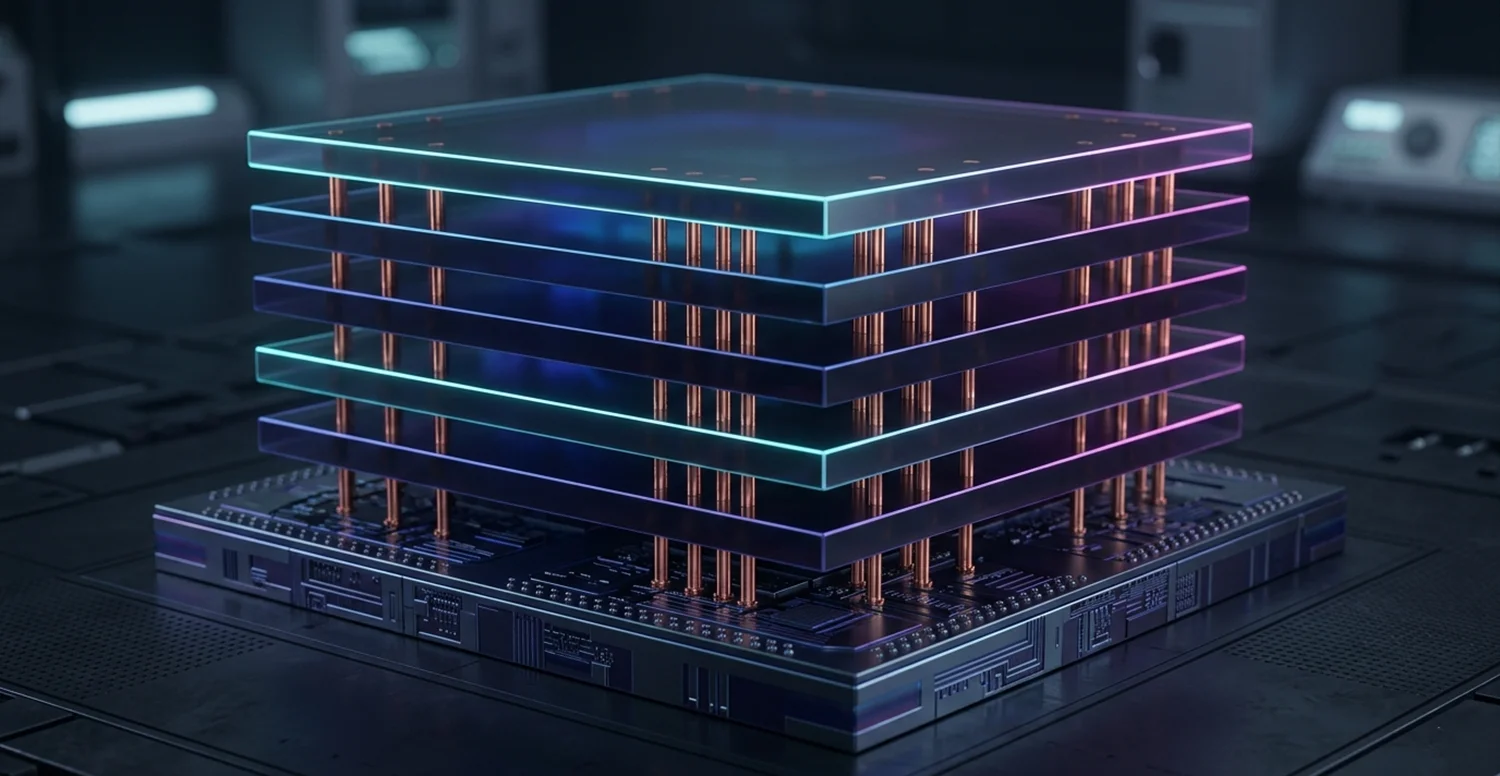

High Bandwidth Memory (HBM) was created to tackle the "memory wall" by raising memory bandwidth so that compute units are no longer starved of data. It takes a completely different design approach from conventional memory: multiple DRAM dies are stacked vertically and interconnected at high speed with TSVs (through-silicon vias), which yields a very wide data interface in an extremely small physical footprint. From the first HBM generation in 2013 to today, the family has evolved over more than a decade, and HBM4 is its latest milestone.

HBM4 is the sixth generation of high-bandwidth memory technology (after HBM, HBM2, HBM2E, HBM3, and HBM3E), officially published by JEDEC as the JESD270-4 standard in April 2025. Succeeding HBM3/HBM3E, it is designed specifically for AI training, high-performance computing, and high-end data center GPUs. It retains the HBM family's 3D-stacked architecture, stacking multiple DRAM dies vertically and integrating them with a logic base die to achieve extremely high bandwidth density in a compact package, which has earned it the nickname "super granary" of AI computing.

What makes HBM4 so powerful?

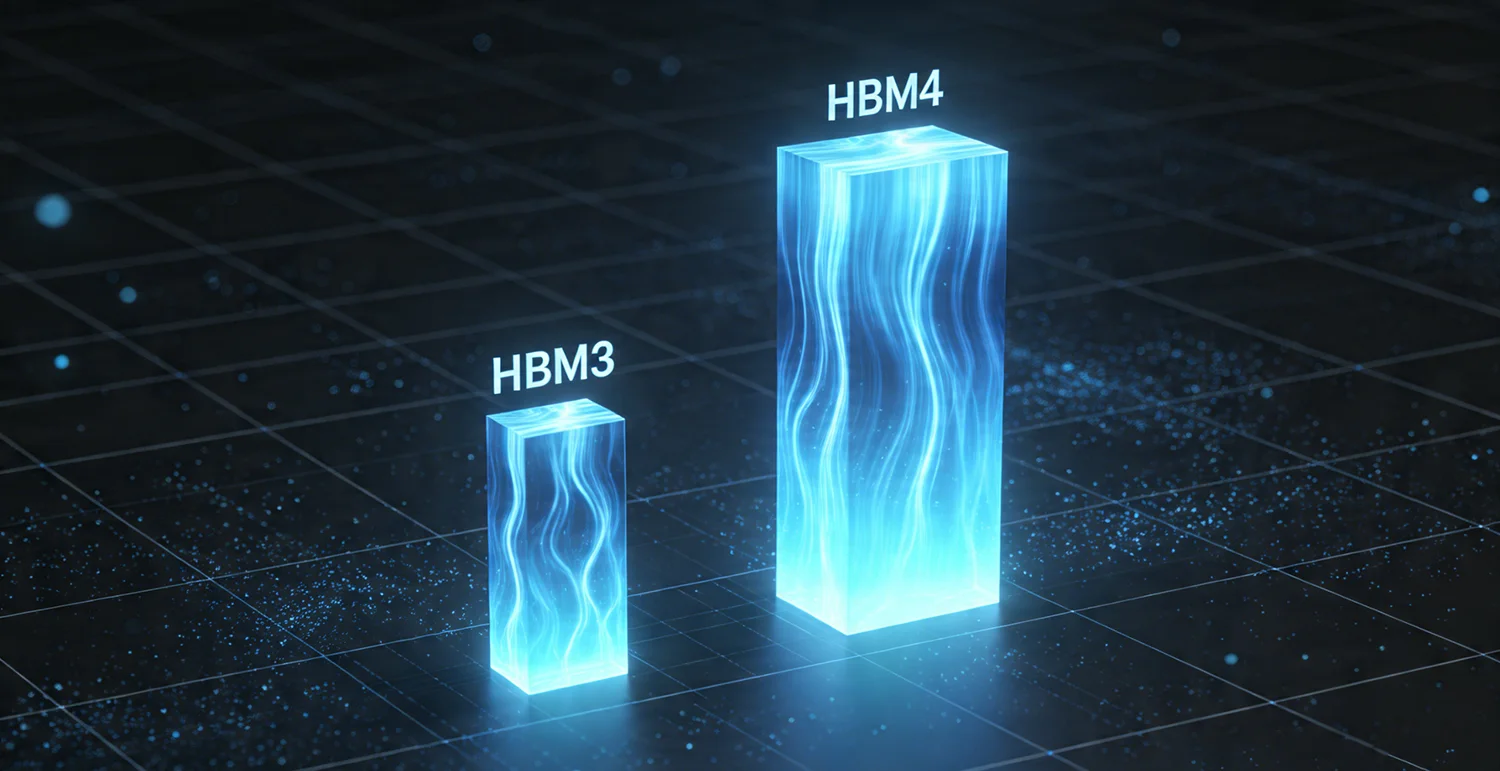

Compared with the previous generation, HBM4 delivers a considerable leap in performance. The table below summarizes the main changes, using HBM3 as the baseline:

| Specification | HBM3 | HBM4 | Improvement |
|---|---|---|---|
| Interface width | 1024 bits | 2048 bits | Doubled |
| Standard bandwidth per stack | ~819 GB/s | 2 TB/s | ~2.4× |
| Independent channels | 16 | 32 | Doubled |
| Maximum capacity per stack | 24 GB (8-Hi) | 64 GB (16-Hi) | ~2.7× |
| Operating voltage | Fixed, ~1.1 V | VDDQ 0.7-0.9 V, VDDC 1.0-1.05 V | More flexible, more efficient |

Let's look at what these numbers actually mean.

Wider interface, higher bandwidth

HBM4 doubles the data interface per stack from 1024 bits to 2048 bits. What does that mean in practice? A conventional DDR5 memory module presents a data interface only 64 bits wide, so a single HBM4 stack has an interface as wide as 32 such DDR5 interfaces operating side by side. With the interface width doubled, total bandwidth doubles even at the same per-pin data rate. In addition, current vendor products often run faster than the standard rate, so the final bandwidth can exceed 2 TB/s and even reach more than 3 TB/s.
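The relationship between interface width, per-pin data rate, and peak bandwidth can be checked with a few lines of Python. This is a minimal sketch: the function name is illustrative, and the 6.4 Gb/s HBM3 pin rate is an assumption chosen because it reproduces the ~819 GB/s figure in the table.

```python
def stack_bandwidth_gb_s(width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one stack in GB/s: width (bits) x per-pin rate (Gb/s) / 8."""
    return width_bits * pin_rate_gbps / 8


# HBM3 baseline; 6.4 Gb/s per pin is an assumed rate (it reproduces ~819 GB/s).
hbm3 = stack_bandwidth_gb_s(1024, 6.4)
# Same pin rate on a 2048-bit interface: bandwidth doubles.
hbm4_same_rate = stack_bandwidth_gb_s(2048, 6.4)
# 2048 bits at the 8 Gb/s rate the article cites for the JEDEC standard.
hbm4_standard = stack_bandwidth_gb_s(2048, 8.0)

print(f"HBM3: {hbm3:.1f} GB/s")
print(f"HBM4, same pin rate: {hbm4_same_rate:.1f} GB/s")
print(f"HBM4, 8 Gb/s: {hbm4_standard:.1f} GB/s")
```

The "2 TB/s" headline figure is therefore 2048 GB/s in decimal units, and any pin rate above 8 Gb/s pushes a stack beyond it.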

More channels, more flexible data scheduling

The channel count rises from 16 to 32, and each channel comprises two pseudo-channels. Channels can be thought of as independent "lanes" inside the memory. More channels mean the system can issue more memory requests at the same time without them interfering with one another. This is especially valuable for the massively parallel matrix operations of AI workloads, because it reduces access conflicts and raises effective bandwidth.

Larger capacity, room to hold the entire model

By raising the maximum number of DRAM layers from 8 to 16, a single HBM4 stack can reach up to 64 GB. In current products, an AI accelerator typically integrates 4 to 8 HBM stacks, so total memory capacity can exceed 256 GB, or even reach 512 GB. For large models with trillions of parameters, this capacity lets far more of the model parameters and intermediate results stay resident in high-speed memory, cutting down on frequent transfers from slower off-package memory or system memory.
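The capacity figures follow from die density multiplied by layer count. The sketch below assumes 24 Gbit and 32 Gbit DRAM dies (an assumption, not stated above); the function names are illustrative.

```python
def stack_capacity_gb(die_gbit: int, layers: int) -> float:
    """Capacity of one stack in GB: die density (Gbit) x layers / 8 bits per byte."""
    return die_gbit * layers / 8


def accelerator_capacity_gb(stacks: int, die_gbit: int, layers: int) -> float:
    """Total on-package capacity for an accelerator carrying several stacks."""
    return stacks * stack_capacity_gb(die_gbit, layers)


# Assumed die densities: 32 Gbit for the 64 GB maximum, 24 Gbit for 36/48 GB parts.
print(stack_capacity_gb(32, 16))           # 16-Hi stack of 32 Gbit dies
print(stack_capacity_gb(24, 12))           # 12-Hi stack of 24 Gbit dies
print(accelerator_capacity_gb(4, 32, 16))  # 4 stacks at maximum capacity
print(accelerator_capacity_gb(8, 32, 16))  # 8 stacks at maximum capacity
```

Under these assumptions, 4 to 8 maximum-capacity stacks give the 256 GB to 512 GB range mentioned above.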

Lower voltage, better energy efficiency

HBM4 introduces finer-grained voltage management. The I/O voltage VDDQ can be set between 0.7 V and 0.9 V, and the core voltage VDDC can be selected between 1.0 V and 1.05 V. Lower voltages directly reduce power consumption. According to vendor data, HBM4's energy per transferred bit is about 40% lower than that of HBM3E. For large data centers, this translates into lower electricity bills and reduced cooling requirements.
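To see what a 40% drop in energy per bit means at the stack level, data-movement power can be estimated as bits per second times joules per bit. This is a rough sketch: the 4.0 pJ/bit baseline is a placeholder chosen only for illustration, not a vendor figure.

```python
def transfer_power_w(bandwidth_tb_s: float, energy_pj_per_bit: float) -> float:
    """Power spent moving data: bits per second x joules per bit."""
    bits_per_s = bandwidth_tb_s * 1e12 * 8
    return bits_per_s * energy_pj_per_bit * 1e-12


BASELINE_PJ_PER_BIT = 4.0                            # placeholder, NOT a measured value
REDUCED_PJ_PER_BIT = BASELINE_PJ_PER_BIT * (1 - 0.40)  # 40% lower energy per bit

# Compare both energy levels at the same 2 TB/s of sustained traffic.
before = transfer_power_w(2.0, BASELINE_PJ_PER_BIT)
after = transfer_power_w(2.0, REDUCED_PJ_PER_BIT)
print(f"{before:.1f} W -> {after:.1f} W per stack at 2 TB/s")
```

Whatever the real baseline is, power at a fixed bandwidth scales linearly with energy per bit, so the saving per stack is the same 40%.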

New security feature: DRFM

HBM4 also adds an important reliability feature: Directed Refresh Management (DRFM). It helps protect against Row-Hammer attacks, a security vulnerability in which rapidly and repeatedly accessing a memory row causes bit flips in physically adjacent rows. DRFM targets the rows at risk and refreshes them selectively, which improves memory security and data integrity.
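The idea of refreshing only the neighbors of a heavily activated row can be illustrated with a toy model. This is not the JEDEC DRFM command sequence: the class name, the per-row counter, and the threshold are all hypothetical simplifications used only to show the principle.

```python
from collections import Counter
from typing import List


class ToyRowHammerGuard:
    """Toy model: count activations per row, refresh neighbors past a threshold."""

    def __init__(self, threshold: int) -> None:
        self.threshold = threshold      # hypothetical activation limit
        self.activations = Counter()    # activations seen since the last refresh

    def activate(self, row: int) -> List[int]:
        """Record one activation; return the neighbor rows that get refreshed."""
        self.activations[row] += 1
        if self.activations[row] < self.threshold:
            return []
        # The aggressor row crossed the limit: refresh its physical neighbors.
        self.activations[row] = 0
        return [r for r in (row - 1, row + 1) if r >= 0]


guard = ToyRowHammerGuard(threshold=3)
for _ in range(3):
    victims = guard.activate(5)
print(victims)  # neighbors of row 5 refreshed on the third activation
```

The point of the sketch is selectivity: only the rows adjacent to an aggressor are refreshed, rather than the whole array.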

What are the main technical advances of HBM4?

Hybrid bonding

Hybrid bonding is widely seen as the next breakthrough in memory packaging. Conventional micro-bump technology connects dies with micron-scale metal bumps whose pitch is hard to shrink below roughly 10 μm, a physical limit that stands in the way of denser stacking and faster signaling. Hybrid bonding removes the bumps entirely: the copper surfaces of the two dies are prepared to be atomically flat and clean, then brought into direct contact so that the copper atoms diffuse and fuse under heat and pressure.

According to test data published by Samsung, hybrid bonding can shrink the die-to-die interconnect pitch to below 10 μm, raising interconnect density by a factor of several to several tens, while also offering lower resistance, shorter signal paths, and better heat dissipation. Samsung's measurements indicate that bumpless hybrid bonding can increase the achievable HBM stack height by about one third and cut thermal resistance by 20%. However, because hybrid bonding equipment is expensive (roughly twice the cost of conventional bonders) and mass-production yield still needs to improve, the technology has not yet been applied to the HBM4 products currently in volume production. Samsung has shipped 16-Hi HBM samples based on hybrid bonding to customers, and commercial adoption is expected to begin gradually with HBM4E (the enhanced version of HBM4).
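The "several times to tens of times" density gain follows from geometry: on a square grid, the number of connections per unit area scales with the inverse square of the pitch. A minimal sketch, with illustrative pitch values that are assumptions rather than published figures:

```python
def density_gain(old_pitch_um: float, new_pitch_um: float) -> float:
    """Ratio of connections per unit area on a square grid: (old / new) squared."""
    return (old_pitch_um / new_pitch_um) ** 2


# Illustrative pitches only; actual process values are not given in the article.
print(density_gain(10.0, 5.0))  # halving the pitch quadruples the density
print(density_gain(10.0, 2.0))  # a 5x smaller pitch gives 25x the density
```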

Distributed interface and pseudo-channel architecture

HBM4 adopts a design with 32 fully independent channels, twice as many as HBM3, and each channel is split into 2 pseudo-channels, each 32 DQ bits wide (2048 bits divided across 64 pseudo-channels). The advantage of this distributed architecture is that it does not require all channels to operate in lockstep. Each channel can service data requests independently, which greatly improves the efficiency of parallel access. The architecture is particularly well suited to tensor operations and to the irregular data access patterns found in AI model training.

Compared with the single-channel design of conventional memory, HBM4's multi-channel architecture is like widening a single-lane road into 32 independent multi-lane highways, each able to carry data efficiently at the same time. This greatly eases data congestion and lets GPUs make fuller use of their compute power.
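One way a memory controller can exploit independent channels is to interleave consecutive blocks of the address space across them. The mapping below is a hypothetical illustration: the 64-byte granule and the simple modulo scheme are assumptions, not the mapping defined by the standard or used by any particular controller.

```python
from typing import Tuple

CHANNELS = 32
PSEUDO_PER_CHANNEL = 2
INTERFACE_BITS = 2048
# Width of one pseudo-channel: 2048 / (32 * 2) = 32 bits.
BITS_PER_PSEUDO = INTERFACE_BITS // (CHANNELS * PSEUDO_PER_CHANNEL)


def route(address: int, granule_bytes: int = 64) -> Tuple[int, int]:
    """Hypothetical mapping: consecutive granules rotate across pseudo-channels."""
    granule = address // granule_bytes
    lane = granule % (CHANNELS * PSEUDO_PER_CHANNEL)
    return lane // PSEUDO_PER_CHANNEL, lane % PSEUDO_PER_CHANNEL


# Four consecutive 64-byte blocks land on different pseudo-channels.
for addr in (0, 64, 128, 192):
    print(addr, route(addr))
```

With a scheme like this, a long sequential read is spread over all 64 pseudo-channels, so no single one becomes the bottleneck.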

Wide-interface, low-power design

HBM4 uses an "ultra-wide interface plus relatively low clock frequency" strategy to reach extremely high bandwidth while keeping power density low. Conventional memory often raises bandwidth by raising clock frequency, which sharply increases power consumption. HBM4 does the opposite: with a 2048-bit data bus, it delivers several times the bandwidth of conventional memory at relatively modest frequencies. This design lowers HBM4's energy per bit by 30 to 40%, a significant advantage as the AI industry pushes to cut costs and improve efficiency.

In addition, HBM4 supports vendor-specific VDDQ levels (selectable between 0.7 V and 0.9 V), which further improves energy efficiency. This helps large-scale data center deployments keep total power under control and reduce operating costs. At the same time, HBM4 maintains backward compatibility with HBM3 controllers: a single controller can support both memory generations, which lowers the barrier to system upgrades.

HBM4 progress and roadmaps of the three major vendors

Samsung was the first manufacturer in the world to announce mass production of HBM4. Samsung Electronics announced on February 12, 2026 that it had started the first commercial mass production of HBM4 and begun shipping to customers, using a 4 nm logic die and 12-Hi stacking, with a data rate of 11.7 Gbps and a quoted bandwidth of up to 3.3 TB/s, well above the JEDEC baseline of 8 Gbps and 2 TB/s. (On a 2048-bit interface, 11.7 Gbps works out to about 3.0 TB/s, so the 3.3 TB/s figure implies a pin rate close to 13 Gbps.) Samsung plans to introduce HBM4E samples in the second half of 2026 to push performance further, and is also developing a 16-Hi version that extends capacity to 48 GB per stack, paving the way for next-generation AI accelerators.

SK Hynix is moving quickly on HBM4. According to its technology roadmap, it plans to launch a 16-Hi HBM4 product in 2026 with 48 GB of capacity and the interface width raised to 2048 bits. Although the company is investing actively in next-generation packaging technologies such as hybrid bonding, the 16-Hi samples it has shown so far still use its mature MR-MUF technology. SK Hynix plans to ramp volume production in 2026, working closely with major customers such as NVIDIA and AMD.

Micron Technology has confirmed that its HBM4 entered mass production in the first quarter of 2026, with initial shipments being 36 GB 12-Hi versions delivering more than 2.8 TB/s of memory bandwidth. The product is designed specifically for NVIDIA's Vera Rubin platform to support next-generation data center AI training. This "customization on demand" strategy positions Micron favorably in specific customer segments.
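Vendor bandwidth claims can be sanity-checked by inverting the bandwidth formula to find the per-pin rate each figure implies on a 2048-bit interface. This is a back-of-the-envelope sketch using decimal units; the function name is illustrative.

```python
def required_pin_rate_gbps(bandwidth_tb_s: float, width_bits: int = 2048) -> float:
    """Per-pin rate (Gb/s) needed to reach a given stack bandwidth (TB/s)."""
    return bandwidth_tb_s * 1000 * 8 / width_bits


# Bandwidth figures quoted in the vendor paragraphs above.
for label, tb_s in (("JEDEC baseline", 2.0), ("Micron", 2.8), ("Samsung", 3.3)):
    print(f"{label}: {tb_s} TB/s needs about {required_pin_rate_gbps(tb_s):.1f} Gb/s per pin")
```

The results land near 7.8, 10.9, and 12.9 Gb/s respectively, which is why a 3.3 TB/s figure points to a pin rate above the 11.7 Gbps also quoted for Samsung.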

How will HBM4 strengthen AI and high-performance computing?

Next-generation AI accelerators

HBM4 is becoming the standard memory for next-generation data center GPUs. The leading AI chip vendors, NVIDIA, AMD, and Intel, are all adopting HBM4 on their latest accelerator platforms. For example, on NVIDIA's Vera Rubin platform, with eight HBM4 stacks, theoretical memory bandwidth can reach 22 TB/s, and with a starting memory capacity of 288 GB it offers ample space and data channels for training large models with trillions of parameters. AMD's upcoming Instinct MI400 series also plans robust HBM4 configurations: the MI455X will carry 12 HBM4 stacks, totaling 432 GB of capacity and 19.6 TB/s of bandwidth, targeting large-scale AI training and inference workloads that are memory- and bandwidth-intensive. In addition, Intel's upcoming AI accelerator, Jaguar Shores, will also adopt HBM4. Specific bandwidth and capacity figures have not been disclosed, but the move into the HBM4 ecosystem is a clear direction.
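Dividing the platform totals by the stack count shows what each configuration implies per stack. The sketch below uses only the figures quoted above; the dictionary layout is illustrative.

```python
# (stacks, total capacity in GB, total bandwidth in TB/s), as quoted above.
platforms = {
    "NVIDIA Vera Rubin": (8, 288, 22.0),
    "AMD MI455X": (12, 432, 19.6),
}

per_stack = {}
for name, (stacks, capacity_gb, bandwidth_tb_s) in platforms.items():
    # Per-stack share of capacity and bandwidth.
    per_stack[name] = (capacity_gb / stacks, bandwidth_tb_s / stacks)
    gb, tb_s = per_stack[name]
    print(f"{name}: {gb:.0f} GB and {tb_s:.2f} TB/s per stack")
```

Both platforms work out to 36 GB per stack, consistent with 12-Hi parts, while the per-stack bandwidth differs (about 2.75 TB/s versus about 1.63 TB/s), which suggests different per-pin operating rates.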

Enabling large-model training with fewer memory constraints

Generative AI training, especially for large language models with hundreds of billions or even trillions of parameters, is the core application scenario for HBM4. These models require massive parameter sets and datasets to be processed at the same time, which places extremely high demands on memory bandwidth and capacity. The 288 to 384 GB of memory per accelerator card made possible by HBM4 means that a single card can hold large model parameters and long context windows that previously required several cards working together. This reduces the need to shuttle data between cards during training, avoids much of the communication overhead and efficiency loss caused by model sharding, and so shortens training cycles considerably. In real-world AI service deployments, HBM4 can reportedly improve large-model inference performance by more than 69%.
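A rough way to see how per-card capacity affects sharding is to count how many cards are needed just to hold the weights. The sketch below is deliberately simplified: it ignores activations, KV cache, and optimizer state, and the model sizes and precisions are illustrative assumptions.

```python
import math


def cards_needed(params_billion: float, bytes_per_param: float, card_gb: float) -> int:
    """Minimum cards to hold the weights alone (1e9 params x bytes = GB)."""
    weights_gb = params_billion * bytes_per_param
    return math.ceil(weights_gb / card_gb)


# Illustrative cases on a 288 GB card (weights only, assumed precisions).
print(cards_needed(70, 2, 288))    # 70B parameters at 2 bytes each
print(cards_needed(1000, 2, 288))  # 1T parameters at 2 bytes each
print(cards_needed(1000, 1, 288))  # 1T parameters at 1 byte each
```

Even under these optimistic assumptions, a trillion-parameter model still spans several cards, so larger per-card capacity reduces sharding rather than eliminating it.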

Accelerating scientific research and simulation

In high-performance computing, HBM4 provides critical infrastructure for scientific workloads that need massive data throughput. Whether for weather forecasting, quantum computing simulation, or genome sequencing analysis, all of these fields rely on high-bandwidth, high-capacity memory systems. Take weather forecasting: weather stations, satellites, and radars around the world generate vast amounts of real-time data at every moment. HBM4 lets supercomputers feed these data streams to their processors quickly, so they can run more detailed atmospheric models in less time, improving the accuracy and lead time of extreme-weather forecasts. In genome sequencing, HBM4-equipped systems can compare and analyze millions of genetic sequences in parallel, which speeds up the identification of disease-related genes and drug targets and saves valuable time in the development of new drugs.

High-end graphics and professional visualization

Although consumer graphics cards today mainly use GDDR memory, the HBM family has always been a potential choice for professional graphics workstations and top-tier gaming cards thanks to its ultra-high bandwidth and low power consumption. As HBM4 mass-production costs gradually fall, ordinary users may one day enjoy smoother and more efficient experiences in scenarios such as 8K gaming, real-time rendering, and video editing. For professionals working with ultra-high-resolution video and complex 3D modeling, HBM4 should significantly cut rendering wait times, making the creative process smoother and more natural.

HBM4, the sixth generation of high-bandwidth memory technology, achieves a double leap in bandwidth and capacity thanks to its ultra-wide 2048-bit interface, its 32-channel architecture, and its advanced 3D stacking, with hybrid bonding expected to follow from HBM4E onward. It is a key memory solution for overcoming the "memory wall" bottleneck. It not only provides a powerful memory foundation for AI training, high-performance computing, and high-end data center GPUs, but also signals that memory technology is entering the era of hybrid bonding and 3D stacking. As HBM4 reaches large-scale commercialization and the technology continues to mature, there is good reason to expect a new surge in AI compute capability, opening up further cutting-edge technologies and application scenarios.