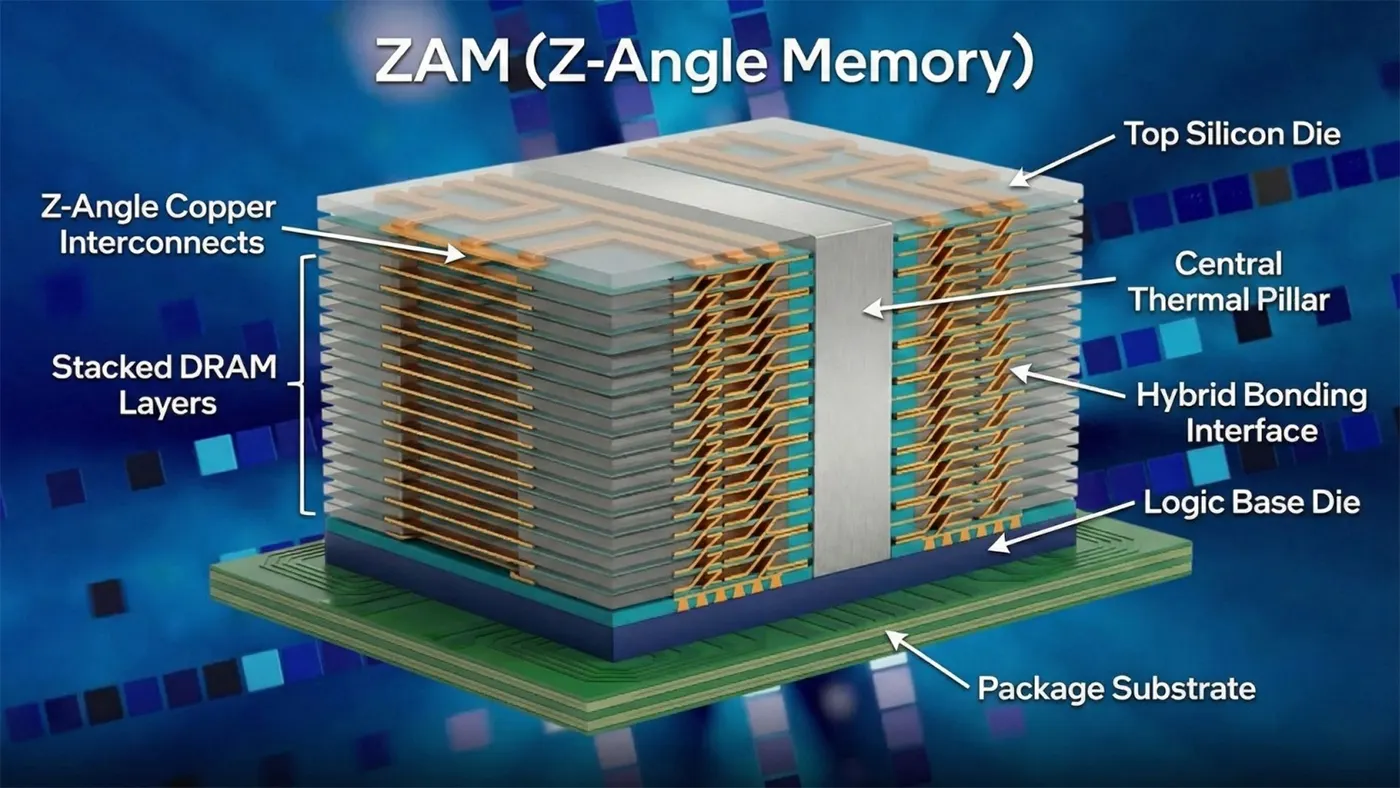

What Is Z-Angle Memory

Core Technical Innovations

ZAM vs. HBM

| Metric | ZAM | HBM3e (Current) | HBM4 (Upcoming) |
|---|---|---|---|
| Capacity per Stack | Up to 512 GB | 24–36 GB | 24–48 GB |
| Max Stack Layers | 50+ layers | 12–16 layers | 16–20 layers |
| Power Consumption | 40–50% lower than HBM3e | Baseline | ~20% lower than HBM3e |
| Interconnect Type | Diagonal Z-angle copper | Vertical TSVs | Vertical TSVs |
| Thermal Performance | Central thermal pillar; low hotspots | High hotspots at high layers | Moderate improvement |
| Target Use Case | Large AI training, HPC | Cloud AI inference | Mid-to-large AI workloads |
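To put the capacity row in perspective, the following is a rough back-of-the-envelope sketch, not a sizing method from any vendor. It takes the upper-bound per-stack capacities from the table and estimates how many stacks would be needed just to hold the weights of a model. The model size (1 trillion parameters) and the storage format (2 bytes per parameter) are illustrative assumptions, and the function name `stacks_needed` is hypothetical.

```python
import math

# Upper-bound per-stack capacities in GB, taken from the comparison table.
CAPACITY_GB = {"ZAM": 512, "HBM3e": 36, "HBM4": 48}


def stacks_needed(params: float, bytes_per_param: int, capacity_gb: int) -> int:
    """Minimum number of stacks needed to hold the model weights alone."""
    total_gb = params * bytes_per_param / 1e9  # decimal gigabytes
    return math.ceil(total_gb / capacity_gb)


# Assumption: 1 trillion parameters stored at 2 bytes each (16-bit weights).
for name, capacity in CAPACITY_GB.items():
    print(f"{name}: {stacks_needed(1e12, 2, capacity)} stacks")
```

The estimate covers weights only. Optimizer state, activations, and per-device replication during training would raise the real figure, so the result is best read as a lower bound for comparison.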

Key Advantages of ZAM

Development Background & Industry Partnerships

Real-World Use Cases

- Large-Scale AI Model Training. Massive per-stack capacity removes memory bottlenecks for trillion-parameter foundation models, allowing faster training and simpler cluster design.

- Cloud AI Inference at Scale. Lower power consumption reduces operational costs for hyperscale cloud providers running continuous inference workloads.

- High-Performance Computing. Scientific simulations, weather modeling, and financial modeling benefit from higher capacity and stable, low-latency memory access.

- CXL Memory Pooling. ZAM’s efficient stacking and high bandwidth make it a natural fit for Compute Express Link (CXL) memory pooling, enabling flexible, shared memory resources in modern data centers.

- Edge AI & Autonomous Systems. Improved power efficiency supports AI deployments in power-constrained edge environments, from industrial automation to autonomous vehicles.
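The power argument behind the inference and edge use cases can be made concrete with a small sketch. It applies the relative power figures from the comparison table (ZAM 40–50% lower than HBM3e, HBM4 roughly 20% lower) to a baseline memory power budget. The 100 kW baseline and the function name `memory_power_kw` are purely hypothetical and chosen only to make the arithmetic easy to follow.

```python
# Power reduction relative to HBM3e, in percent, as (min, max) from the table.
REDUCTION_PCT = {"HBM3e": (0, 0), "HBM4": (20, 20), "ZAM": (40, 50)}


def memory_power_kw(baseline_kw: float, memory_type: str) -> tuple[float, float]:
    """Return the (low, high) estimated memory power for a given HBM3e baseline."""
    min_cut, max_cut = REDUCTION_PCT[memory_type]
    low = baseline_kw * (100 - max_cut) / 100   # best case: largest reduction
    high = baseline_kw * (100 - min_cut) / 100  # worst case: smallest reduction
    return low, high


# Assumption: a deployment whose HBM3e memory draws 100 kW in total.
for name in REDUCTION_PCT:
    low, high = memory_power_kw(100.0, name)
    print(f"{name}: {low:.0f}-{high:.0f} kW")
```

This scales memory power only. Compute, networking, and cooling are outside the sketch, so any savings at the system level would be proportionally smaller.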

Current Status & Future Timeline

- February 2026: First prototype demonstration at Intel Connection Japan, focused on thermal management.

- 2027: Engineering samples and test chips expected to be released to hardware partners.

- 2030: Target mass commercial deployment for AI data centers and HPC systems.

Z-Angle Memory is a substantial departure from conventional stacked DRAM design. By replacing vertical TSVs with a diagonal, Z-shaped interconnect topology, it targets HBM’s most persistent constraints: stack height, power consumption, and heat. The competitive landscape for AI memory is shifting quickly, however. Rival technologies, such as Samsung’s recently announced zHBM, are also targeting the post-HBM4 era with aggressive performance claims. Commercializing any new memory architecture also depends on achieving high manufacturing yield, a competitive cost structure, and, most critically, adoption by major AI accelerator and system vendors. ZAM presents a compelling blueprint, but its path from prototype to industry standard depends on overcoming these engineering and ecosystem challenges.