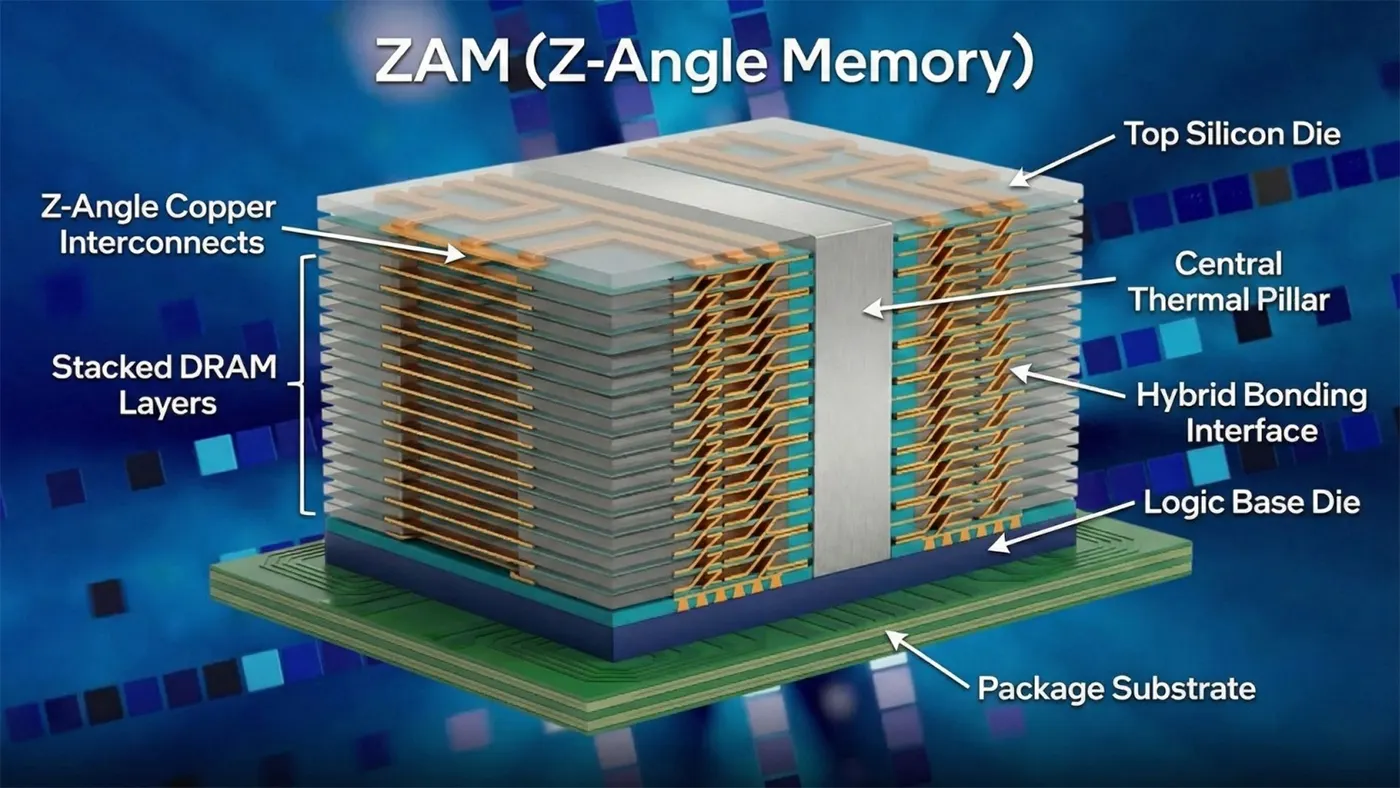

What is Z-Angle Memory?

Core technical innovations

ZAM vs. HBM

| Metric | ZAM | HBM3e (current) | HBM4 (upcoming) |
|---|---|---|---|
| Capacity per stack | Up to 512 GB | 24-36 GB | 24-48 GB |
| Maximum stacked layers | 50+ layers | 12-16 layers | 16-20 layers |
| Power consumption | 40-50% lower than HBM3e | Baseline | ~20% lower than HBM3e |
| Interconnect type | Diagonal Z-angle copper | Vertical TSVs | Vertical TSVs |
| Thermal performance | Central thermal pillar; minimal hot spots | Pronounced hot spots | Moderate improvement |
| Target use case | Large-scale AI training, HPC | Cloud AI inference | Medium-to-large AI workloads |
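
To make the capacity row concrete, here is a minimal sketch that estimates how many stacks would be needed just to hold a model's weights. It takes the upper-bound capacity of each column in the table. The workload (1 trillion parameters at 2 bytes each), the function name `stacks_needed`, and the convention 1 GB = 10^9 bytes are illustrative assumptions, not figures from the source. Optimizer state, activations, and bandwidth are ignored.

```python
# Upper-bound per-stack capacities in GB, taken from the comparison table.
STACK_CAPACITY_GB = {"ZAM": 512, "HBM3e": 36, "HBM4": 48}

BYTES_PER_GB = 10**9  # assumption: decimal gigabytes


def stacks_needed(num_params: int, bytes_per_param: int, capacity_gb: int) -> int:
    """Minimum number of stacks needed to hold the weights alone."""
    total_bytes = num_params * bytes_per_param
    stack_bytes = capacity_gb * BYTES_PER_GB
    # Integer ceiling division avoids floating-point rounding.
    return -(-total_bytes // stack_bytes)


# Hypothetical workload: 1 trillion parameters stored at 2 bytes each (2 TB of weights).
for name, capacity in STACK_CAPACITY_GB.items():
    print(name, stacks_needed(10**12, 2, capacity))
```

Under these assumptions the weights fit in 4 ZAM stacks, against 56 HBM3e stacks or 42 HBM4 stacks. This is a back-of-the-envelope comparison rather than a sizing guide.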

Key advantages of ZAM

Development history and industry partnerships

Real-world use cases

- Large-scale AI model training. The massive per-stack capacity eliminates memory bottlenecks for trillion-parameter foundation models, enabling faster training and simpler cluster design.

- Cloud AI inference at scale. Lower power consumption reduces operating costs for hyperscale cloud providers that run inference workloads continuously.

- High-performance computing. Scientific simulations, weather modeling, and financial modeling benefit from larger capacity and stable, low-latency memory access.

- CXL memory pooling. ZAM's efficient stacking and high bandwidth make it a natural fit for CXL (Compute Express Link) memory pooling, enabling flexible, shared memory resources in modern data centers.

- Edge AI and autonomous systems. Improved power efficiency supports AI deployments in power-constrained edge environments, from industrial automation to autonomous vehicles.
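
The operating-cost argument for cloud inference can be roughed out from the power-consumption row of the comparison table. The sketch below converts the stated reductions into a power range for a given HBM3e baseline. The 1000 W baseline and the function name `memory_power_range` are hypothetical, and the HBM4 figure is only approximate ("~20%") in the table.

```python
# Power reduction versus HBM3e in percent, as (low, high) bounds from the comparison table.
REDUCTION_PCT = {"HBM3e": (0, 0), "HBM4": (20, 20), "ZAM": (40, 50)}


def memory_power_range(baseline_watts: float, memory_type: str) -> tuple[float, float]:
    """Return (best case, worst case) memory power in watts for an HBM3e baseline."""
    low_pct, high_pct = REDUCTION_PCT[memory_type]
    best = baseline_watts * (100 - high_pct) / 100   # largest claimed reduction
    worst = baseline_watts * (100 - low_pct) / 100   # smallest claimed reduction
    return best, worst


# Hypothetical baseline: 1000 W of HBM3e memory power across a deployment.
for name in REDUCTION_PCT:
    print(name, memory_power_range(1000, name))
```

With that baseline, ZAM would land between 500 W and 600 W and HBM4 at roughly 800 W, provided the claimed reductions hold in production parts.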

Current status and future timeline

- February 2026: First prototype demonstrated at Intel Connection Japan, with a focus on thermal management.

- 2027: Engineering samples and test chips are expected to become available to hardware partners.

- 2030: Target for mass commercial deployment in AI data centers and HPC systems.

Z-Angle Memory represents a paradigm shift in stacked DRAM design. By replacing vertical TSVs with a diagonal, Z-shaped interconnect topology, it targets the most persistent constraints of HBM. But the competitive landscape for AI memory is moving quickly. Rival technologies, such as Samsung's recently announced zHBM, are also aiming at the post-HBM4 era with aggressive performance claims. In addition, the successful commercialization of any new memory architecture depends on achieving high manufacturing yield, competitive cost structures and, above all, adoption by the leading AI accelerator and system vendors. So although ZAM offers a compelling blueprint, its path from prototype to industry standard will depend on its ability to overcome these real-world engineering and ecosystem challenges.