On March 24, 2026, Google Research officially unveiled TurboQuant, a disruptive AI compression technology that compresses the key-value cache (KV Cache) used during large language model inference down to 3-bit precision. According to Google, this achieves a 6x reduction in KV Cache memory usage and up to an 8x increase in inference speed, with no measurable loss in model accuracy. The announcement triggered immediate volatility in the global memory chip market: Micron Technology’s stock price plummeted, and major players like Samsung and SK Hynix also suffered, with the group collectively losing over $90 billion in market value. What makes this technology so powerful? Will it truly disrupt the storage industry? How will storage products like SSDs, DDR, and HBM evolve?

What is TurboQuant?

TurboQuant is a training-free, data-oblivious online vector quantization algorithm developed by Google Research. It is specifically designed to aggressively compress the key-value cache (KV Cache) during large language model inference.

The KV Cache is a temporary data structure that stores the attention keys and values of previously processed tokens during model inference. It grows linearly with context length, becoming a critical bottleneck that limits a model’s ability to handle long text sequences. Traditional compression methods often require model retraining, large calibration datasets, or additional storage for quantization parameters. TurboQuant’s breakthrough lies in its reported ability to compress 16/32-bit values down to 3 bits with no measurable accuracy loss, without any model adjustments, training data, or extra memory overhead, making it a true “plug-and-play” solution.
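To make the scale concrete, the KV Cache footprint can be estimated from the model shape and the sequence length. The sketch below uses a hypothetical model configuration (32 layers, 8 KV heads, head dimension 128) chosen only for illustration; these are not figures from the TurboQuant paper, and the function name `kv_cache_bytes` is our own. It also ignores any per-block metadata.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value):
    """Estimate the KV cache size in bytes for a single sequence.

    The factor 2 accounts for storing both a key vector and a value
    vector per token, per layer, per KV head.
    """
    values = 2 * layers * kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
SEQ_LEN = 128 * 1024  # a 128K-token context

fp16 = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, SEQ_LEN, 16)
q3 = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, SEQ_LEN, 3)

print(f"16-bit KV cache: {fp16 / 2**30:.1f} GiB")
print(f" 3-bit KV cache: {q3 / 2**30:.1f} GiB")
print(f"ratio: {fp16 / q3:.2f}x")
```

For this configuration the cache shrinks from 16 GiB to 3 GiB per sequence. Pure bit-width arithmetic gives 16/3, or about 5.3x, against a 16-bit baseline; the ratio observed in a real system depends on the baseline precision and on the effective number of bits stored per value.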

Two-Stage Compression Architecture

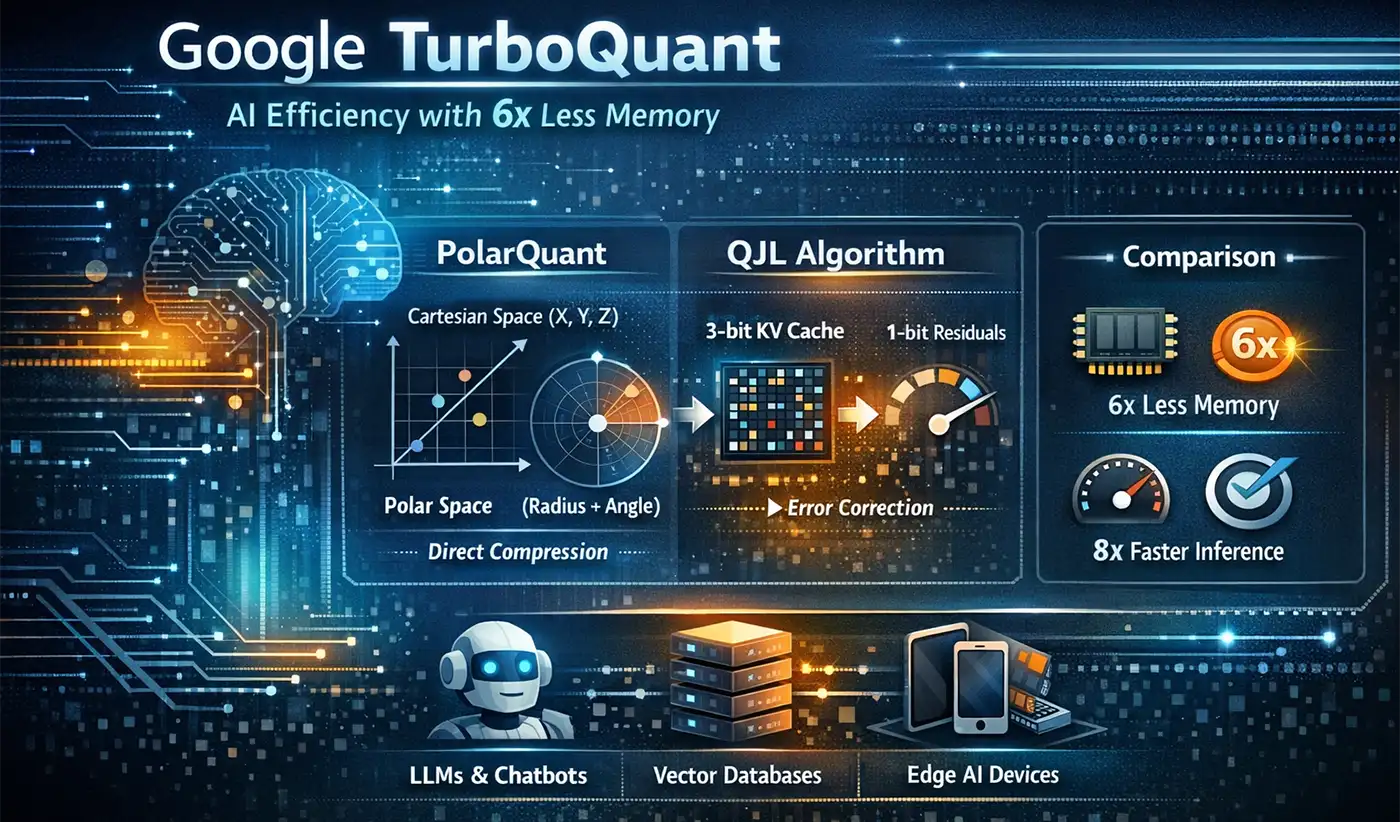

TurboQuant’s core innovation is its two-stage compression framework, which uses mathematical transformations rather than brute-force quantization to achieve an ideal balance of efficiency and accuracy:

PolarQuant: This is the main compression stage, which transforms high-dimensional vectors from Cartesian to polar coordinates. It first applies a random rotation to the input vectors to make the data distribution more uniform. It then decomposes each vector into radius (representing magnitude) and angle (representing semantic direction), quantizing only the angle. This process completely eliminates the need to store normalization parameters required by traditional methods.

QJL (Quantized Johnson-Lindenstrauss Transform): This is the residual correction stage. It uses just 1 bit (a sign bit) per projected value to apply an unbiased correction to the small errors introduced during the PolarQuant stage, so that attention calculation accuracy remains uncompromised. This step addresses the error accumulation found in traditional compression methods, which is what makes accuracy-neutral compression theoretically possible.
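The PolarQuant idea described above (rotate, split into radius and angle, quantize only the angle) can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the authors' implementation: it pairs coordinates into 2D points and applies a single polar step, it substitutes a randomized Hadamard transform (random sign flips followed by a Walsh-Hadamard transform) for the random rotation, and it keeps radii at full precision. All function names are hypothetical.

```python
import math
import random


def fwht(vec):
    """Orthonormal fast Walsh-Hadamard transform (length must be a power of two)."""
    out = list(vec)
    n = len(out)
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [v * scale for v in out]


def rotate(vec, signs):
    """Assumed stand-in for the random rotation: sign flips, then Hadamard."""
    return fwht([v * s for v, s in zip(vec, signs)])


def unrotate(vec, signs):
    """Invert rotate(): the orthonormal Hadamard transform is its own inverse."""
    return [v * s for v, s in zip(fwht(vec), signs)]


def encode(vec, signs, bits):
    """Return (radii, angle_codes); only the angles are quantized."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    rotated = rotate(vec, signs)
    radii, codes = [], []
    for i in range(0, len(rotated), 2):
        x, y = rotated[i], rotated[i + 1]
        radii.append(math.hypot(x, y))
        codes.append(int(round(math.atan2(y, x) / step)) % levels)
    return radii, codes


def decode(radii, codes, signs, bits):
    """Rebuild an approximation of the original vector."""
    step = 2 * math.pi / (1 << bits)
    rotated = []
    for r, c in zip(radii, codes):
        rotated.extend((r * math.cos(c * step), r * math.sin(c * step)))
    return unrotate(rotated, signs)


rng = random.Random(0)
dim, bits = 64, 4
signs = [rng.choice((-1.0, 1.0)) for _ in range(dim)]
vector = [rng.gauss(0.0, 1.0) for _ in range(dim)]

radii, codes = encode(vector, signs, bits)
approx = decode(radii, codes, signs, bits)
error = math.sqrt(sum((a - b) ** 2 for a, b in zip(vector, approx)))
norm = math.sqrt(sum(a * a for a in vector))
print(f"relative reconstruction error at {bits} angle bits: {error / norm:.3f}")
```

Because the angle codes live on a fixed grid that does not depend on the data, no per-block scale or zero point has to be stored alongside them, which is the overhead the text says traditional methods carry.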

This combination of “aggressive main compression + unbiased residual correction” allows TurboQuant to achieve performance at 3-bit precision that matches or even exceeds full-precision baselines, a result the authors report on standard benchmarks like LongBench.
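The "unbiased residual correction" can be illustrated with a sign-bit sketch of the Johnson-Lindenstrauss kind. The code below is a minimal sketch, assuming a dense Gaussian projection and a stand-in residual vector; the dimensions, the projection size `m`, and all function names are our own choices, not details taken from the paper. It stores one sign bit per projected coordinate plus the residual's norm, then estimates the inner product between a full-precision query and the residual.

```python
import math
import random


def make_projection(m, d, rng):
    """Draw an m x d matrix of i.i.d. standard Gaussian entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sign_sketch(proj, vec):
    """Compress vec to one sign bit per projection row, plus its norm."""
    bits = [1 if dot(row, vec) >= 0 else -1 for row in proj]
    return bits, math.sqrt(dot(vec, vec))


def estimate_inner_product(proj, query, bits, norm):
    """Estimate <query, vec> from the sign sketch of vec.

    For a Gaussian row s, E[(s.q) * sign(s.v)] = sqrt(2/pi) * <q, v> / ||v||,
    so rescaling by sqrt(pi/2) * ||v|| makes the estimate unbiased.
    """
    total = sum(dot(row, query) * b for row, b in zip(proj, bits))
    return math.sqrt(math.pi / 2) * norm * total / len(proj)


rng = random.Random(0)
d, m = 16, 4000
proj = make_projection(m, d, rng)
residual = [rng.gauss(0.0, 0.1) for _ in range(d)]  # stand-in for stage-one error
query = [rng.gauss(0.0, 1.0) for _ in range(d)]

bits, norm = sign_sketch(proj, residual)
print("exact   :", dot(query, residual))
print("estimate:", estimate_inner_product(proj, query, bits, norm))
```

The estimate is noisy for any single draw, but its expectation equals the exact inner product, so the correction does not push attention scores systematically in one direction. A very large `m` is used here only to make that visible in one run.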

Key Features and Advantages

TurboQuant stands out among compression techniques due to four core advantages:

No Training or Fine-tuning Required: It can be applied directly to existing models (Llama, Mistral, Gemma, Gemini, etc.) without any adjustments or retraining, allowing for immediate deployment.

Data-Oblivious: Its performance is independent of input data distribution, working effectively on all types of text, code, and image data without needing scenario-specific optimization.

Zero Overhead: It requires no additional storage for quantization parameters, normalization factors, etc., a stark contrast to traditional methods.

Theoretically Optimal: It offers mathematically near-optimal distortion guarantees, providing reliable performance predictability for large-scale deployment.

Cloud Over the Halo: A Brief Note on Academic Controversy

Alongside the market shockwaves caused by TurboQuant, an academic dispute has emerged. On March 27, Jianyang Gao, a postdoctoral fellow at ETH Zurich, publicly alleged that TurboQuant’s core methodology is highly similar to RaBitQ, an algorithm he published in 2024 at SIGMOD. Gao pointed out that the Google team’s paper avoided discussing methodological similarities, disparaged RaBitQ’s theoretical results as “suboptimal” without justification, and used unfair experimental comparisons (testing RaBitQ on a single-core CPU while testing TurboQuant on an A100 GPU).

According to Gao, these issues were communicated to the Google team via email before the paper’s release. While the Google team acknowledged some issues, they reportedly only promised to make corrections after the conference and denied the technical similarities. As of March 31, the RaBitQ team has posted a public comment on ICLR OpenReview and filed a formal complaint with the ICLR conference ethics committee. This controversy serves as a reminder: TurboQuant’s technical value still requires time to be fully validated, and the academic conduct issues involved are equally noteworthy.

Potential Impact on the Storage Industry

A Rational Look at Market Reaction

The sharp decline in storage chip stocks following TurboQuant’s announcement was more an overreaction driven by market sentiment than a rational assessment. To understand the true impact, it’s crucial first to define TurboQuant’s scope of influence:

Only Affects Inference: It has no impact on the model training process, which is the core demand scenario for high-end memory like HBM.

Only Compresses the KV Cache: Model weights, activations, and other core data are unaffected. These represent the primary consumers of storage resources.

The Paradox of Efficiency Gains: Historical experience suggests that improvements in computational efficiency often lead to larger-scale applications, potentially increasing overall storage demand rather than decreasing it (the Jevons paradox).
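The second point can be made concrete with a little arithmetic: when only the KV Cache shrinks, the overall footprint falls by much less than the headline compression ratio. The numbers below are illustrative inputs, not measurements, and the function name is hypothetical.

```python
def footprint_after_kv_compression(weights_gb, kv_cache_gb, kv_ratio):
    """Total memory after shrinking only the KV cache by kv_ratio."""
    return weights_gb + kv_cache_gb / kv_ratio


weights_gb = 140.0   # illustrative: weights of a large model held at 16 bits
kv_cache_gb = 20.0   # illustrative: KV cache of the currently active requests
kv_ratio = 16 / 3    # 16-bit values stored in 3 bits

before = weights_gb + kv_cache_gb
after = footprint_after_kv_compression(weights_gb, kv_cache_gb, kv_ratio)

print(f"before: {before:.2f} GB, after: {after:.2f} GB")
print(f"overall reduction: {before / after:.2f}x")
```

With these inputs the total drops from 160 GB to about 143.75 GB, an overall reduction of roughly 1.11x. The larger the KV Cache is relative to the weights (very long contexts, many concurrent requests), the closer the overall reduction gets to `kv_ratio`.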

Potential Impacts on SSD, DDR, and HBM

TurboQuant may have a dual impact on DDR memory. On one hand, it reduces reliance on HBM by enabling the KV Cache to be stored more cost-effectively in DDR5/DDR6 instead of requiring expensive HBM. This creates new opportunities for high-bandwidth DDR5-8800+ and future DDR6, positioning them as a cost-effective alternative to HBM in AI servers. On the other hand, TurboQuant may accelerate the adoption of CXL memory expansion technology. By pooling DDR memory via CXL, AI servers can allocate memory resources more flexibly to handle inference tasks of varying sizes, further enhancing DDR utilization efficiency and market demand.

Contrary to market concerns, TurboQuant is likely a significant positive development for SSDs:

Long Context Overflow Storage: When the KV Cache exceeds memory capacity, low-latency, high-endurance SSDs (for example, pSLC-mode drives on PCIe 4.0/5.0 NVMe) become an ideal secondary cache, significantly boosting demand for the performance and capacity of enterprise-grade SSDs.

Vector Database Expansion: The increased adoption of Retrieval-Augmented Generation (RAG) systems, driven by TurboQuant, will directly fuel the growth of vector databases, which rely heavily on high-performance SSDs for their underlying storage.

Edge AI Deployment: TurboQuant makes it possible to run AI models on consumer-grade devices, expanding the market for client-side SSDs, particularly increasing demand for low-power, high-performance M.2 SSDs.

Market panic regarding HBM appears overdone:

Clear Distinction Between Training and Inference: TurboQuant only affects the KV Cache during inference. The HBM bandwidth demands of model training are undiminished, and HBM remains an essential requirement for training ultra-large-scale models.

Model Weight Storage Unaffected: Model weights, which account for over 90% of AI memory consumption, are not compressed by TurboQuant. HBM’s role as the primary medium for storing these weights remains secure.

Hybrid Architecture Optimization: TurboQuant allows HBM resources to be allocated more efficiently to critical computing tasks, promoting the development of hybrid storage architectures combining HBM, DDR, and SSD, rather than simple replacement.

Potential New Paradigm for AI Infrastructure

TurboQuant’s real value lies not in “eliminating” a specific type of storage, but in reshaping the storage tiering architecture of AI infrastructure, driving the creation of a more efficient and economical memory-storage hierarchy.

A New Order of Intelligent Data Flow

Future AI server storage architectures are likely to feature a clear three-tier pyramid:

Top Tier – HBM: Responsible for storing core computational data like model weights and activations, meeting the bandwidth-intensive demands of training and inference tasks.

Middle Tier – DDR: Acts as the primary carrier for the KV Cache. Benefiting from TurboQuant’s compression efficiency, DDR5/DDR6 will become the workhorse memory for inference scenarios.

Bottom Tier – SSD: Handles long context overflow, vector databases, and model checkpoints. Low-latency, high-endurance enterprise SSDs will encounter new growth opportunities.

The core of this tiered architecture is intelligent data placement – dynamically moving data between tiers based on access frequency, latency requirements, and storage cost to achieve the optimal balance of performance and cost.
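One simple way to picture such a placement policy is a greedy pass that puts the most frequently accessed data into the fastest tier that still has room. The sketch below is only an illustration under assumed capacities and access rates; the `Block` type, the `place_blocks` function, and every number in it are hypothetical, and a real policy would also weigh latency targets and cost per gigabyte.

```python
from dataclasses import dataclass


@dataclass
class Block:
    name: str
    size_gb: float
    accesses_per_sec: float


def place_blocks(blocks, tiers):
    """Greedily assign the hottest blocks to the fastest tier with room.

    tiers is an ordered list of (tier_name, capacity_gb), fastest first.
    Returns a dict mapping block name to tier name; a block that fits
    nowhere maps to None.
    """
    free = {name: capacity for name, capacity in tiers}
    placement = {}
    for block in sorted(blocks, key=lambda b: b.accesses_per_sec, reverse=True):
        placement[block.name] = None
        for tier_name, _ in tiers:
            if block.size_gb <= free[tier_name]:
                free[tier_name] -= block.size_gb
                placement[block.name] = tier_name
                break
    return placement


# Illustrative capacities and workloads, not measurements.
tiers = [("HBM", 80.0), ("DDR", 512.0), ("SSD", 4096.0)]
blocks = [
    Block("model_weights", 70.0, 1000.0),
    Block("active_kv_cache", 30.0, 500.0),
    Block("idle_kv_cache", 500.0, 5.0),
    Block("checkpoint", 600.0, 0.01),
]

for name, tier in place_blocks(blocks, tiers).items():
    print(f"{name:16s} -> {tier}")
```

With these inputs the weights land in HBM, the active KV Cache in DDR, and the idle KV Cache and checkpoint on SSD, which mirrors the three-tier pyramid described above.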

The Rise of Software-Defined Storage

TurboQuant may accelerate the adoption of Software-Defined Storage (SDS) in AI, particularly in the following areas:

Memory Management Systems: Management software that can monitor KV Cache size in real-time and intelligently decide whether to retain data in HBM, DDR, or overflow it to SSDs will become a standard component of AI infrastructure.

CXL Memory Pooling: Pooling DDR memory resources from multiple servers via the CXL protocol will provide elastically scalable memory resources for AI clusters, further reducing the HBM capacity requirement per individual server.

Compression-Aware Storage: Storage devices will begin to natively support compression algorithms like TurboQuant, enabling fast data compression and decompression at the hardware level to improve overall system efficiency.
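As a rough picture of what such memory management software does at runtime, the sketch below keeps the most recently used KV Cache blocks in a small fast tier and demotes the least recently used ones to a slower tier, promoting them again on access. It is a toy two-tier model built on Python's `OrderedDict`; the class name `TieredKVStore` and its methods are hypothetical, and the plain dictionary merely stands in for DDR or SSD overflow.

```python
from collections import OrderedDict


class TieredKVStore:
    """Keep recently used KV blocks in a fast tier; demote the rest."""

    def __init__(self, fast_capacity_blocks):
        self.fast_capacity = fast_capacity_blocks
        self.fast = OrderedDict()  # block_id -> data, most recently used last
        self.slow = {}             # stands in for DDR or SSD overflow

    def put(self, block_id, data):
        """Insert or refresh a block in the fast tier."""
        self.slow.pop(block_id, None)
        self.fast[block_id] = data
        self.fast.move_to_end(block_id)
        self._demote_if_needed()

    def get(self, block_id):
        """Return a block, promoting it to the fast tier if it was demoted."""
        if block_id in self.fast:
            self.fast.move_to_end(block_id)
            return self.fast[block_id]
        data = self.slow.pop(block_id)  # raises KeyError for unknown blocks
        self.put(block_id, data)
        return data

    def _demote_if_needed(self):
        """Move least recently used blocks to the slow tier when over capacity."""
        while len(self.fast) > self.fast_capacity:
            victim, data = self.fast.popitem(last=False)
            self.slow[victim] = data


store = TieredKVStore(fast_capacity_blocks=2)
for block_id in ("a", "b", "c"):
    store.put(block_id, f"kv-{block_id}")
store.get("a")  # "a" was demoted earlier, so this promotes it again

print("fast tier:", list(store.fast))
print("slow tier:", list(store.slow))
```

Least-recently-used demotion is only one possible policy. Because compressed blocks are smaller, the same fast-tier budget holds more of them, which is where a compression scheme and a tiering manager would interact.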

The release of TurboQuant is not an omen of doom for the storage industry, but rather a new starting point for deeper integration between storage and AI. It will not simply “eliminate” a certain type of storage product. Instead, through a revolutionary breakthrough in compression technology, it will drive the storage industry towards greater efficiency and intelligence. This means future AI services will be capable of handling longer texts, providing more precise answers, while potentially reducing hardware costs. True technological revolution is never about simple replacement, but about achieving a leap in resource utilization efficiency through innovation, thereby opening doors to broader applications.